Please join us for the October installment of the 2018 Digital Futures Discovery Series, a year-long program led by Harvard's Digital Futures Consortium that explores the ongoing transformation of scholarship through innovative technology.

A major task for museum staff is to describe objects in their collections. These descriptions tend to be factual and typically answer the who, what, when, where, how, and why questions about an object. Very rarely do these descriptions tell you what is actually depicted.

There are two main reasons for this: describing everything you see in an object can be quite time-consuming, and it is highly subjective.

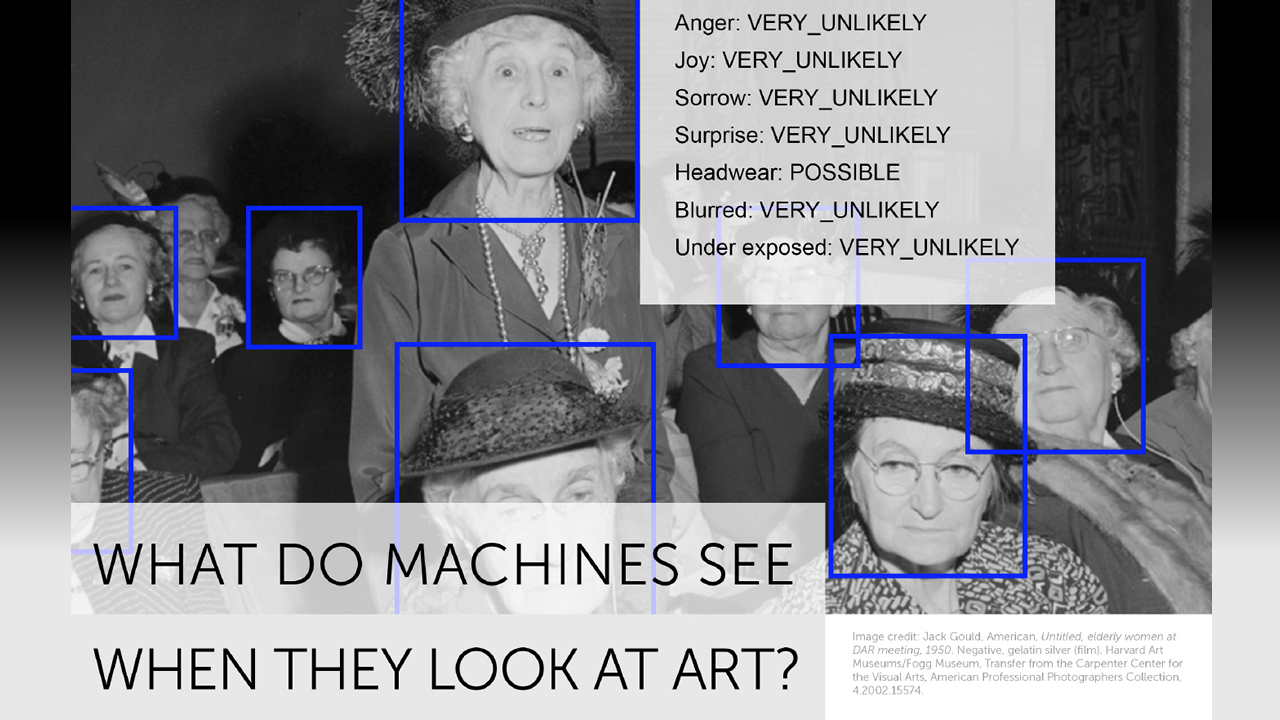

So what if we defer to a computer, and ask a few computer vision services to look at – and describe – the museum’s art objects?

For the past two years, the Harvard Art Museums staff have been doing just this. They’ve been exploring alternative methods for describing the museum’s collections using machine processing and computer vision.

Jeff Steward, the director of the department of Digital Infrastructure and Emerging Technology, will discuss this project, share what’s been learned, and explain where we’re headed with it.

Jeff Steward is director of digital infrastructure and emerging technologies (DIET) at the Harvard Art Museums, where he explores and leads the museums' use of a wide range of digital technology. He oversees the collections database, API, and photography studio. For the opening of the new Harvard Art Museums in November 2014, he helped launch the Lightbox Gallery, a public research and development space. Steward has worked in museums, with museum data, since 1999. His areas of research include visualization of cultural datasets; open access to metadata and multimedia material; and data interoperability and sustainability.